Introduction

The landscape of artificial intelligence (AI) technology is evolving rapidly, driven by advancements in hardware, software, and an ever-growing demand for more efficient methods of data processing. In this competitive arena, two major contenders have emerged as leaders in the development of AI chips: Google and NVIDIA. Both companies are pushing the boundaries of what is possible in machine learning and AI applications, catering not only to technology enthusiasts but also to business owners looking to gain a competitive edge in their respective industries.

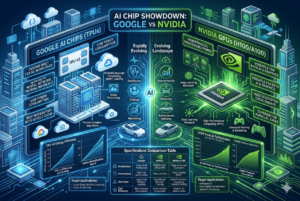

NVIDIA, with its long-standing expertise in graphics processing units (GPUs), has positioned itself as a powerhouse in AI computation. Its GPUs are recognized for strong performance in parallel processing tasks, which are crucial for accelerating deep learning workloads. On the other hand, Google has introduced its Tensor Processing Units (TPUs), specialized chips designed to handle the specific demands of neural network training and inference, emphasizing energy efficiency and optimized performance for Google’s AI services.

As AI technologies permeate various sectors including healthcare, finance, and autonomous vehicles, the relevance of effective AI chips has surged. The choice between Google’s TPUs and NVIDIA’s GPUs is not merely a technical decision; it reflects a broader strategic consideration of how businesses wish to leverage AI capabilities to enhance operations, product development, and customer experiences. In light of this growing relevance, an in-depth comparison between these two giants is warranted.

This blog post aims to dissect the intricate details of Google AI chips and NVIDIA’s offerings, exploring their architectures, performance metrics, and practical applications. By understanding the strengths and weaknesses of each option, businesses can make informed decisions that align with their technological needs and aspirations in a rapidly advancing digital landscape.

Key Points

In the evolving landscape of artificial intelligence (AI), both Google and NVIDIA have made substantial investments in developing specialized chips to optimize AI processing. The following key points summarize the comparisons, advantages, and insights related to Google AI chips and NVIDIA:

- Architecture and Design: Google AI chips, specifically the Tensor Processing Units (TPUs), are designed for high throughput and efficiency in deep learning tasks, while NVIDIA’s Graphics Processing Units (GPUs) offer versatile capabilities suitable for both graphical applications and parallel processing tasks.

- Performance: NVIDIA GPUs perform well across the widest range of AI tasks, helped by an established software ecosystem (CUDA, cuDNN, and broad framework support). On the other hand, Google’s TPUs excel in specific workloads, especially large-scale neural network training written for Google’s software stack (TensorFlow, JAX, and the XLA compiler).

- Integration and Ecosystem: Google AI chips are tightly integrated into Google’s cloud services and are accessed primarily through the Google Cloud Platform, which makes them easy to adopt for developers already working there. Conversely, NVIDIA sells a broad range of hardware that can be rented from most major cloud providers or purchased for on-premises use, making it suitable for diverse AI applications outside of a single cloud environment.

- Cost Efficiency: For organizations heavily invested in cloud computing, Google’s pricing model for TPUs can provide significant cost savings. Meanwhile, NVIDIA’s extensive market presence may lead to competitive pricing but typically involves higher upfront costs tied to hardware acquisition.

- Flexibility and Use Cases: NVIDIA GPUs are favored for graphical simulations, gaming, and complex modeling, showcasing their flexibility across multiple industries. In contrast, Google’s TPUs are uniquely tailored for large-scale machine learning tasks, particularly in applications like speech recognition and image processing.

- Future Prospects: Looking ahead, Google continues to release new TPU generations and to deepen their integration with its other AI tools, while NVIDIA continues to expand both its GPU lineup and the software platform built around it.

Understanding these key differences helps companies make informed decisions when selecting between Google AI chips and NVIDIA products for their specific AI needs.
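The cost trade-off between renting cloud accelerators and buying hardware outright can be framed as a simple break-even calculation. The sketch below is illustrative only: the function name `break_even_hours` and every price in it are hypothetical placeholders, not quotes from Google or NVIDIA, and should be replaced with real figures from current price lists.

```python
def break_even_hours(purchase_cost: float,
                     hourly_owning_cost: float,
                     hourly_rental_rate: float) -> float:
    """Return the hours of use after which buying beats renting.

    purchase_cost      -- upfront hardware price (hypothetical input)
    hourly_owning_cost -- power, cooling, hosting per hour of use
    hourly_rental_rate -- cloud price per accelerator-hour
    """
    saving_per_hour = hourly_rental_rate - hourly_owning_cost
    if saving_per_hour <= 0:
        # Renting is never more expensive per hour, so buying never pays off.
        return float("inf")
    return purchase_cost / saving_per_hour


# Hypothetical example values, chosen only to show the arithmetic.
hours = break_even_hours(purchase_cost=30_000.0,
                         hourly_owning_cost=0.50,
                         hourly_rental_rate=3.00)
print(f"Break-even after {hours:,.0f} accelerator-hours")
```

If expected usage is well below the break-even point, renting is likely the cheaper option; sustained, near-continuous usage tends to favor ownership.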

Performance Comparison: Google vs NVIDIA

When comparing the performance of Google AI chips to NVIDIA chips, it is essential to consider processing power, speed, and energy efficiency. Google’s TPUs (Tensor Processing Units) are designed primarily for machine learning tasks. TPU v4 has a published peak of about 275 teraflops per chip for bfloat16 training, and Google has since released newer generations (TPU v5e, v5p, and Trillium), so v4 is no longer the latest version.

On the other hand, NVIDIA’s A100 and H100 GPUs, which are tailored for AI workloads, also showcase impressive performance. The A100 reaches a peak of about 312 teraflops for dense FP16 Tensor Core math, while the H100 (SXM) pushes this to roughly 990 teraflops, or about twice that with structured sparsity. Both types of chips are built for massively parallel processing, which is crucial for AI computations.

Speed and efficiency are equally important in this comparison. Google has optimized its TPUs for a narrow set of operations, which allows high throughput at comparatively low power. Dividing published peak throughput by maximum board power gives roughly 1.4 teraflops per watt for TPU v4 (about 275 teraflops at up to roughly 190 W) and about the same for the H100 SXM (roughly 990 teraflops at up to 700 W). On peak figures the two are therefore close; Google’s chips may still be more efficient on specific deep learning workloads, but that depends on how fully a given model utilizes each chip.
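Per-watt efficiency is simply peak throughput divided by power draw, so it is easy to sanity-check. The following sketch assumes approximate vendor-published peak figures (dense 16-bit throughput and maximum board power); the `Accelerator` class is a hypothetical helper, and the numbers should be verified against current datasheets before being relied on. Peak figures of this kind yield values on the order of one teraflop per watt.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Accelerator:
    name: str
    peak_tflops: float      # dense 16-bit peak, approximate (assumption)
    max_power_watts: float  # maximum board power, approximate (assumption)

    def tflops_per_watt(self) -> float:
        """Peak throughput divided by maximum power draw."""
        return self.peak_tflops / self.max_power_watts


# Approximate published peak figures; treat these as assumptions.
chips = [
    Accelerator("TPU v4", 275.0, 192.0),
    Accelerator("A100 SXM", 312.0, 400.0),
    Accelerator("H100 SXM", 989.0, 700.0),
]

for chip in chips:
    print(f"{chip.name}: {chip.tflops_per_watt():.2f} peak TFLOPS per watt")
```

Peak ratios like these are an upper bound. Measured efficiency depends on how much of the peak a real workload sustains, which varies by model and software stack.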

Benchmarks indicate differing outcomes depending on the workload. For instance, on large natural language processing models written for Google’s software stack, TPUs can match or outperform NVIDIA’s GPUs. Conversely, graphical rendering is the exclusive domain of NVIDIA’s architecture, since TPUs have no graphics pipeline, which highlights the distinct advantages of each chip family based on its intended use.

Strengths and Weaknesses

When examining the landscape of AI processing, both Google and NVIDIA present technologies that cater to different needs and preferences in the realm of artificial intelligence. Google’s AI chips are known for their highly specialized design, which is primarily focused on machine learning tasks. The Tensor Processing Units (TPUs) developed by Google have been optimized for tasks such as deep learning. This specialization allows for impressive performance with lower power consumption, making them advantageous for large-scale, energy-efficient operations.

One of the standout strengths of Google’s TPUs is their seamless integration with Google Cloud services. This synergy not only maximizes performance but also simplifies deployment for users who are invested in the Google ecosystem. Additionally, TPUs excel in performing vast matrix multiplications and handling tensor operations, which are crucial in deep learning, thereby resulting in significantly faster training times compared to conventional CPUs.
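To see why matrix multiplication throughput dominates training time, it helps to count the arithmetic. Multiplying an m×k matrix by a k×n matrix takes about 2·m·k·n floating-point operations (one multiply and one add per term). The sketch below estimates an idealized runtime from that count; the helper names, the 275-teraflop peak, and the 50% utilization figure are illustrative assumptions, not measurements.

```python
def matmul_flops(m: int, k: int, n: int) -> int:
    """FLOPs for an (m x k) @ (k x n) product: one multiply and one add per term."""
    return 2 * m * k * n


def ideal_seconds(flops: int, peak_tflops: float, utilization: float = 1.0) -> float:
    """Idealized runtime given a peak rate and the fraction of it actually sustained."""
    if not 0.0 < utilization <= 1.0:
        raise ValueError("utilization must be in (0, 1]")
    return flops / (peak_tflops * 1e12 * utilization)


# One 8192 x 8192 x 8192 multiplication is about 1.1 trillion operations.
flops = matmul_flops(8192, 8192, 8192)

# Assumed accelerator: 275 TFLOPS peak, sustaining half of it (hypothetical).
seconds = ideal_seconds(flops, peak_tflops=275.0, utilization=0.5)
print(f"{flops:,} FLOPs, about {seconds * 1e3:.1f} ms at 50% of peak")
```

A training run performs many such multiplications per step, so even modest differences in sustained throughput add up to large differences in total training time.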

Conversely, NVIDIA’s GPUs have entrenched themselves as a staple in both gaming and AI. Their architecture supports a diverse range of applications, not limited solely to AI, making them highly versatile. With the capability to process parallel tasks efficiently, NVIDIA’s graphics processing units are favored in scenarios requiring significant computational power. Notably, NVIDIA’s CUDA platform offers a robust environment that allows developers to leverage the immense parallel computing capabilities of their GPUs, giving them an edge in flexibility.

However, a potential weakness for Google is that its TPUs may lack the versatility that NVIDIA’s technology offers. While TPUs are extraordinary in specific AI applications, they may not perform as well in other general-purpose scenarios compared to the more adaptive performance of NVIDIA’s GPUs. Lastly, the accessibility of development tools and support from NVIDIA can be considered a significant advantage, particularly for new developers entering the field of AI.

Market Share and Industry Impact

The AI chip market has evolved rapidly in recent years, with key players such as NVIDIA and Google making significant strides. NVIDIA currently holds a dominant share of the market, largely thanks to its graphics processing units (GPUs), which are integral to AI applications ranging from deep learning to autonomous vehicles. As of 2023, NVIDIA’s share of the discrete GPU sector was estimated at over 85%; that figure covers graphics cards generally rather than AI accelerators alone, but it underscores the company’s pivotal role in the advancement of AI technologies.

In contrast, Google’s AI chips, particularly the Tensor Processing Units (TPUs), have carved out a niche in the industry, especially within cloud-based services. While their overall market share remains smaller than NVIDIA’s, they are increasingly attractive to businesses that rely on Google’s extensive cloud platform. Google’s focus on optimizing TPUs for machine learning applications highlights its strategy to position itself as a leader in AI computation for enterprise solutions.

The implications of these figures are noteworthy for businesses and technology users. Organizations leaning heavily on AI methodologies may gravitate towards NVIDIA’s GPUs for performance-driven tasks, while companies working within Google’s ecosystem may find TPUs advantageous for cost-effective and scalable solutions. This distinction in market share and product focus reveals a bifurcation in the industry, where businesses must carefully consider their unique needs and technical requirements when selecting hardware.

Looking ahead, trends indicate a potential shift in AI chip adoption. As the demand for energy-efficient and specialized processors grows, there is a likelihood that Google may increase its market share, particularly if it continues to innovate and extend the application of its chips across different industries. Moreover, emerging players are likely to disrupt the established market dynamics, hinting at a continually evolving landscape for AI technologies.

Use Cases and Applications

The advancement of AI technologies has led to the development of numerous applications in various sectors. Google AI chips and NVIDIA GPUs play significant roles in this transformation, driving innovation across industries such as business, healthcare, and automotive.

In the business sector, organizations are leveraging Google AI chips for improved data analysis and operational efficiencies. For instance, Google Cloud’s AI services utilize Tensor Processing Units (TPUs) to enable companies to process vast amounts of data efficiently, thereby enhancing their decision-making processes. As a result, businesses can gain valuable insights from data patterns that were previously undetectable, leading to better strategic outcomes.

In the healthcare industry, both Google and NVIDIA have made substantial contributions. For example, NVIDIA’s GPUs are widely used in medical imaging, where deep learning algorithms analyze images for diagnostic purposes. This technology empowers healthcare professionals to detect conditions such as tumors or fractures with heightened accuracy and speed. Additionally, Google AI chips are utilized in predictive analytics to forecast patient needs and optimize treatment plans, improving patient care outcomes significantly.

Within the automotive sector, AI chips from both companies contribute to the development of autonomous driving technology. NVIDIA’s DRIVE platform harnesses the power of its GPUs to process real-time data from sensors and cameras, enabling vehicles to navigate and make decisions autonomously. Likewise, Google’s chips can be used to train the machine learning models that predict driving conditions and manage in-vehicle systems, contributing to a safer driving experience.

In summary, both Google AI chips and NVIDIA GPUs are instrumental in driving innovations across various sectors. From enhancing business efficiencies to advancing healthcare solutions and facilitating the development of autonomous vehicles, these technologies demonstrate their extensive applicability in real-world scenarios. As industries continue to evolve, the integration of these AI technologies is likely to grow, leading to further advancements and efficiencies.

Visual Comparisons

To help potential buyers discern the differences between Google AI chips and NVIDIA products, the table below summarizes key specifications and performance characteristics of both brands.

Specifications Comparison Table:

| Feature | Google AI Chips | NVIDIA |
|---|---|---|
| Architecture | Tensor Processing Unit (TPU) | Graphics Processing Unit (GPU) |
| Performance | High Efficiency for ML Tasks | Exceptional in Graphics Rendering |
| Target Use Cases | Data Centers, Cloud Services | Gaming, Research, Deep Learning |
| Price Range | Competitive Pricing | Varies Widely from Budget to High-End |

When considering performance, it is essential to identify the intended application. Google AI Chips excel in machine learning tasks, offering distinct advantages in environments such as data centers and cloud services. Conversely, NVIDIA tends to dominate in graphics rendering, making it a preferred choice for gaming and visual effects.

Pro Tip: Before making a purchase, consider the primary functionalities you need. Analyze whether your applications lean towards AI computations or require high-fidelity graphical outputs. Review the specifications in depth to ensure that the selected chip aligns with your intended use.

Comparison tables like the one above help potential buyers match a chip to their specific requirements, ensuring optimal performance and value for their investment.

Frequently Asked Questions (FAQs)

As the demand for artificial intelligence (AI) technologies continues to surge, many individuals and businesses find themselves choosing between Google’s AI chips and NVIDIA’s renowned offerings. This section addresses common questions about the performance, market statistics, and unique features of these competing technologies.

One of the primary inquiries involves the performance differences between Google AI chips and NVIDIA GPUs. Google AI chips, particularly the Tensor Processing Units (TPUs), are designed primarily for tensor computations, which improves their performance on machine learning tasks. Conversely, NVIDIA’s GPUs are extremely versatile, excelling in a range of graphical tasks while also proving efficient for AI applications. In real-world applications, benchmarks show that each option holds advantages in different scenarios; hence, the choice largely depends on the specific use case.

Market statistics indicate growing competition between these chips. NVIDIA has captured a significant share of the AI hardware market thanks to its early investments in deep learning and neural networks. However, Google’s TPUs, which are tailored for cloud-based AI services, are rapidly gaining traction. This emerging rivalry suggests that both companies recognize the need for continuous innovation in response to market demands.

Specific features are also a frequent point of interest. Google’s AI chips boast capabilities like high throughput and reduced latency for specific machine learning tasks, making them optimal for certain applications. NVIDIA, on the other hand, provides CUDA support which allows developers to leverage its vast ecosystem of development tools, creating a strong appeal for those looking for flexibility in AI development. Each offering clearly demonstrates unique strengths, and thus, the ideal choice is contingent upon the particular requirements of the user or organization.

Conclusion and Call to Action

In the evolving landscape of artificial intelligence (AI), the performance and suitability of AI chips can significantly influence the outcomes of various applications. This comparison between Google AI chips and NVIDIA’s graphics processing units (GPUs) illuminates the strengths and weaknesses of each. Google’s Tensor Processing Units (TPUs) are custom-built to accelerate machine learning tasks, offering exceptional efficiency for large-scale data processing. In contrast, NVIDIA’s GPUs excel in versatility, catering to a wide array of applications, from graphics rendering to deep learning.

Both chip families offer unique benefits based on specific use cases. For businesses primarily focused on deep learning and neural network training, selecting a Google AI chip could lead to optimized performance and reduced operational costs. On the other hand, organizations requiring flexibility across different workloads may find NVIDIA’s offerings more advantageous, allowing them to leverage the broad spectrum of supported software and frameworks.

As enterprises and developers continue to explore AI technologies, understanding the distinct features of these chips is crucial. A well-informed decision regarding which AI chip to implement can greatly enhance computational capabilities and operational efficiency. Therefore, it is imperative for organizations to assess their unique needs, taking into account factors such as workload types, budget constraints, and scalability requirements.
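One way to make such an assessment concrete is a weighted decision matrix over the factors just listed. The sketch below is a hypothetical illustration: the criteria names, the weights, and the 1-to-5 scores are placeholders that each organization would need to fill in from its own evaluation, and they do not represent a verdict on either product.

```python
from typing import Mapping


def weighted_score(scores: Mapping[str, float],
                   weights: Mapping[str, float]) -> float:
    """Weighted average of per-criterion scores, normalized by total weight."""
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(scores[name] * weight for name, weight in weights.items()) / total_weight


# Hypothetical weights reflecting one organization's priorities.
weights = {"workload_fit": 0.5, "budget": 0.3, "scalability": 0.2}

# Hypothetical 1-5 scores from an internal evaluation (placeholders only).
option_a = {"workload_fit": 4, "budget": 4, "scalability": 5}
option_b = {"workload_fit": 4, "budget": 3, "scalability": 4}

for label, scores in (("Option A", option_a), ("Option B", option_b)):
    print(f"{label}: {weighted_score(scores, weights):.2f}")
```

The value of the exercise lies less in the final number than in forcing an explicit statement of which factors matter most and by how much.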

We encourage readers to stay informed about the latest advancements in AI chip development, as technological evolution in this space continues to influence various sectors. Whether you are a developer, a business leader, or an enthusiast, considering your next steps in integrating AI technology is vital in staying ahead of the curve. Engage with the community, experiment with different platforms, and contribute to the ongoing discourse surrounding AI innovation.